ChatGPT describes itself:

As a large language model trained by OpenAI, it is designed to generate human-like text in response to the input it receives. It does this by using machine learning algorithms to analyze large amounts of text data and generate responses based on that analysis.

Its primary goal is to provide helpful and accurate information to the best of its ability. It is not a human, so it does not have personal experiences or opinions, and it is not able to browse the internet or access any information that is not part of its training data. Its responses are based solely on the text data that it has been trained on, which includes a wide variety of information on many different topics.

It is able to generate text in a variety of styles and formats, including conversational language, explanatory text, and summaries of information. It can also answer questions, provide definitions and explanations, and generate original content on a wide range of topics. It is not able to provide specific answers to questions that require up-to-date information or knowledge that is outside of its training data.

In general, its ability to provide helpful and accurate information is limited by the quality and quantity of the training data that it has been trained on, as well as the algorithms and techniques used to generate its responses. It is constantly learning and improving, but it will always be limited by the nature of machine learning and the data that it has been trained on.

In fact, it is so insane: the first time I asked it to describe itself, it answered in the first person, and then I just asked it to rephrase in the third person and it was perfect, just like that.

I asked it a bunch of questions

I've been picking its little (big) brain for a while to figure out a bunch of cool interesting things, like...

How does it handle bias in the data it trains on? (This was a very interesting one! Ask it yourself, do it!)

How can it learn things incrementally? (A big pain point of many machine learning models is having to relearn everything to deal with new challenges. This does not seem to be the case with ChatGPT.)

How does it even know when it's being asked a divisive question, and what does it do in such cases? (It makes a really great effort to give you an unbiased answer, offering the consensus or the prominent opinions on the subject so you can be more informed.)

And even slightly philosophical questions, like how does it know what its goals are? For instance, why does it even bother to try and give unbiased answers to begin with?

To Conclude

For now, I'm taking its word for it that it's not yet sentient, but it displayed real, deep knowledge about the topics I discussed with it, and I have to admit it's kind of scary.

I don't think GJ is the place for doomsday prophecies, but damn, the singularity is scarier than ever. You better start being polite to your Roombas and Alexas, you hear me??

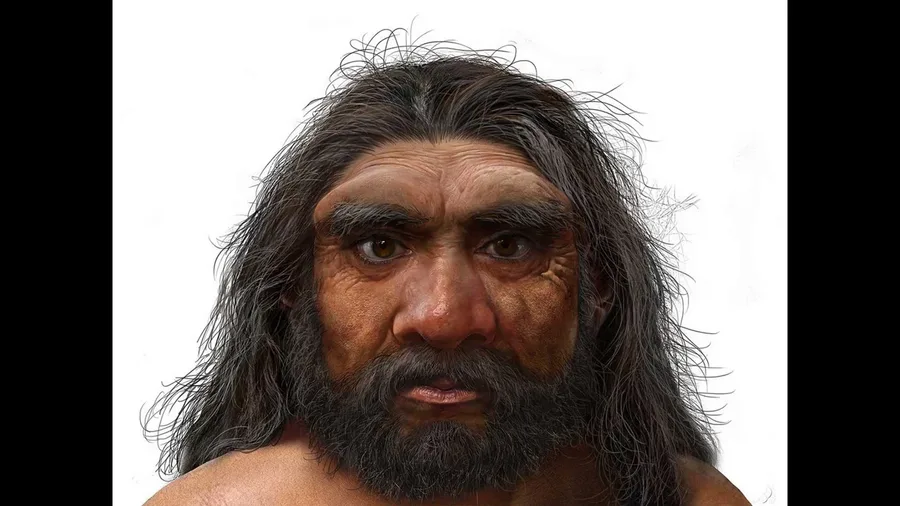

(image was generated with Stable Diffusion)
